Coming in 2020: Major Dataset Update to nuScenes by Aptiv

As we look back on 2019, we are proud to have led a groundbreaking year for self-driving research and industry collaboration. In March, Aptiv released nuScenes, the industry’s first large-scale public dataset to provide information from a comprehensive autonomous vehicle (AV) sensor suite. In publishing an open-source AV dataset of this caliber, we knew that we were solving for a gap in our industry, which has historically limited making data available for research purposes. Our objective in releasing nuScenes by Aptiv was to enable the future of safe mobility through more robust industry research, data transparency and public trust.

The reception far and wide exceeded our expectations. Within the first months of releasing nuScenes, we saw thousands of users and hundreds of top academic institutions download and use the dataset. Not long after, we were excited to see other industry leaders -- including Lyft, Waymo, Hesai, Argo, and Zoox -- follow suit with their own datasets. At the same time we began receiving requests from notable self-driving developers asking to license nuScenes, so that they could use the data to improve on their own autonomous driving systems. It’s this type of groundbreaking collaboration and information sharing that supports our industry’s ‘safety first’ mission.

Today, we are proud to announce the next big step for Aptiv’s open-source data initiative. In the first half of 2020 our team will release two major updates to nuScenes: nuScenes-lidarseg and nuScenes-images. Commercial licensing will be available for both datasets.

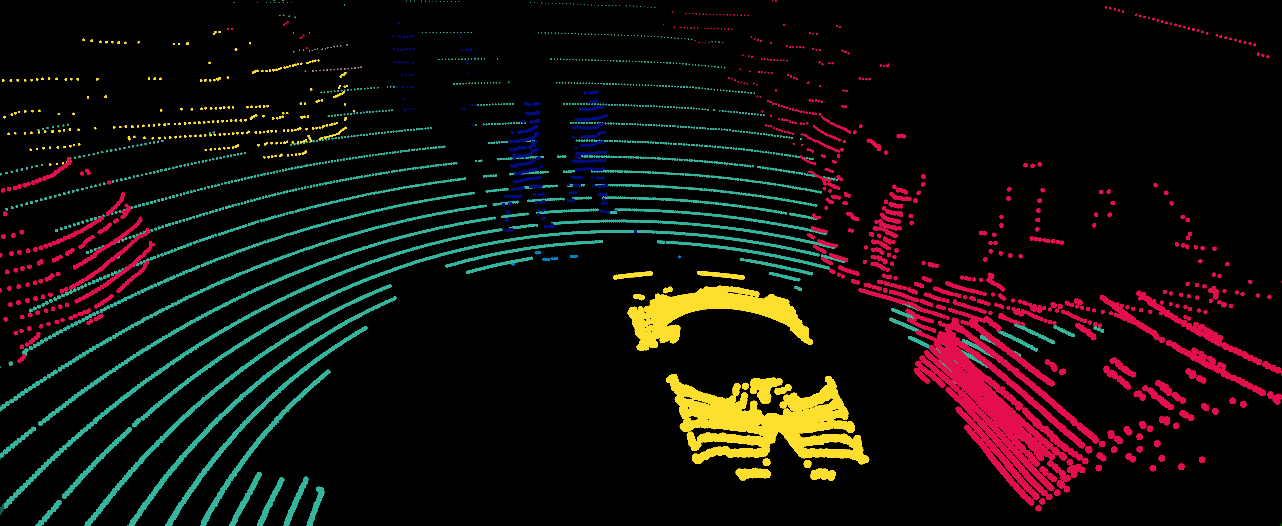

nuScenes-lidarseg

In the original nuScenes release, cuboids (also known as bounding boxes) are used to represent 3D object detection. While this is a useful representation in many cases, cuboids lack the ability to capture fine shape details of articulated objects. As self-driving technology continues to advance, vehicles need to pick up on as much detailed information of the outside world as possible. For example, if a pedestrian or cyclist is using arm signals to communicate with other road users, the vehicle needs to recognize this and respond correctly.

To achieve such levels of granularity, we released nuScenes-lidarseg. This dataset contains annotations for every single lidar point in the 40,000 keyframes of the nuScenes dataset with a semantic label -- an astonishing 1,400,000,000 lidar points annotated with one of 38 labels.

This is a major step forward for nuScenes, Aptiv, and our industry’s open-source initiative, as it allows researchers to study and quantify novel problems such as lidar point cloud segmentation, foreground extraction and sensor calibration using semantics.

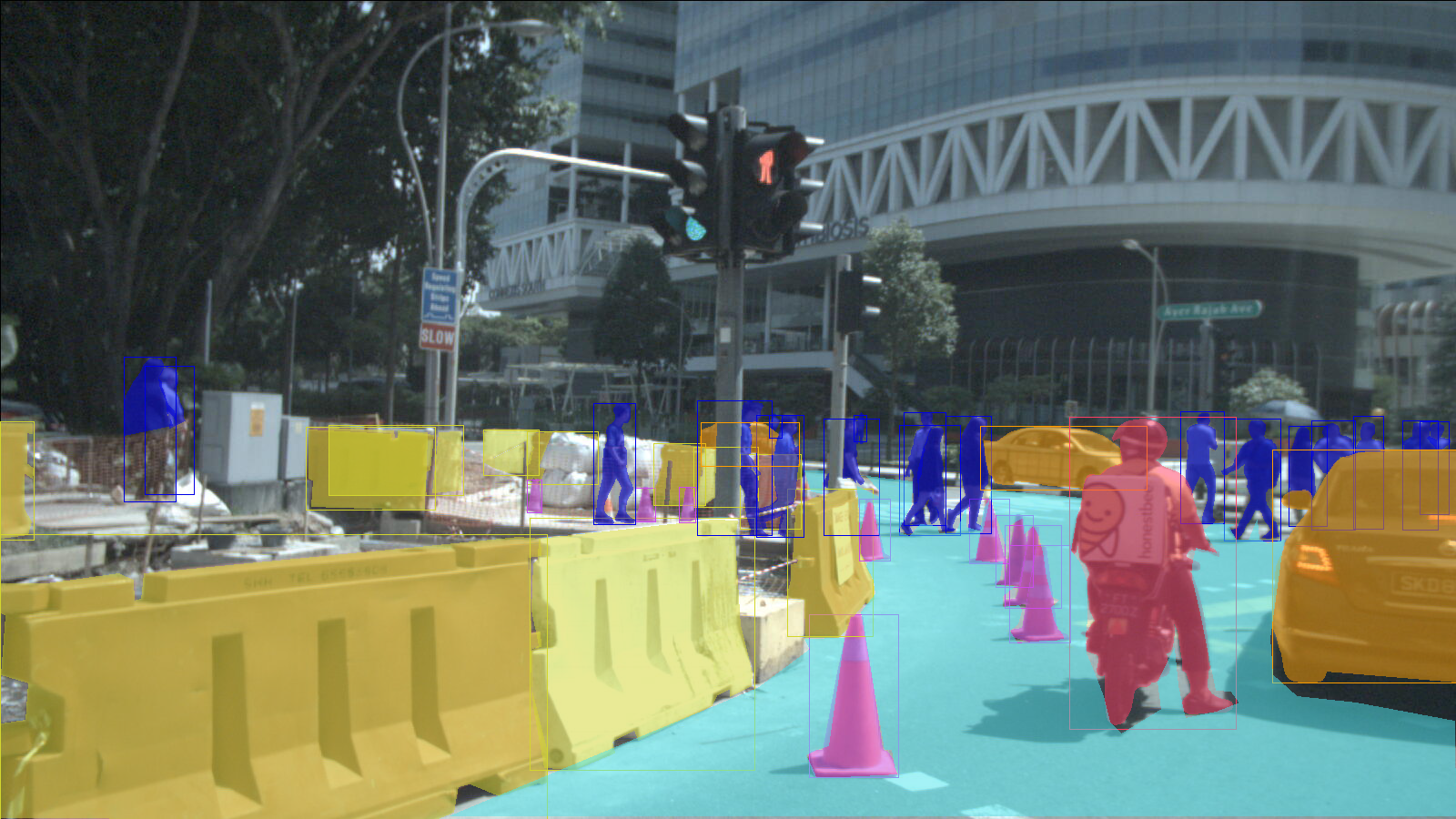

nuScenes-images

Leading scene understanding algorithms on common object classes, like cars, is one thing -- but it’s a well-known problem that these algorithms do not perform well on rare classes (tricycles or emergency vehicles, for example). Furthermore, Level 5 self-driving cars will require vehicles to operate in varying environments, including rain, snow and at night.

To collect a more sophisticated dataset that could successfully respond to edge cases or unfavorable driving conditions, we deployed data-mining algorithms on a large volume of collected data to select interesting images.

Our ambition is that the resulting nuScenes-images dataset will allow self-driving cars to operate safely in challenging and unpredictable scenarios.

nuScenes-images will be comprised of 100,000 images annotated with over 800,000 2D bounding boxes and instance segmentation masks for objects like cars, pedestrians and bikes; and 2D segmentation masks for background classes such as drivable surface. The taxonomy is compatible with the rest of nuScenes enabling a wide range of research across multiple sensor modalities.

The new data content will be released in the first half of 2020. To learn more about commercial licensing for nuScenes contact us at nuScenes@nutonomy.com or visit us at nuScenes.org