Motional Builds Radars Into Perception System

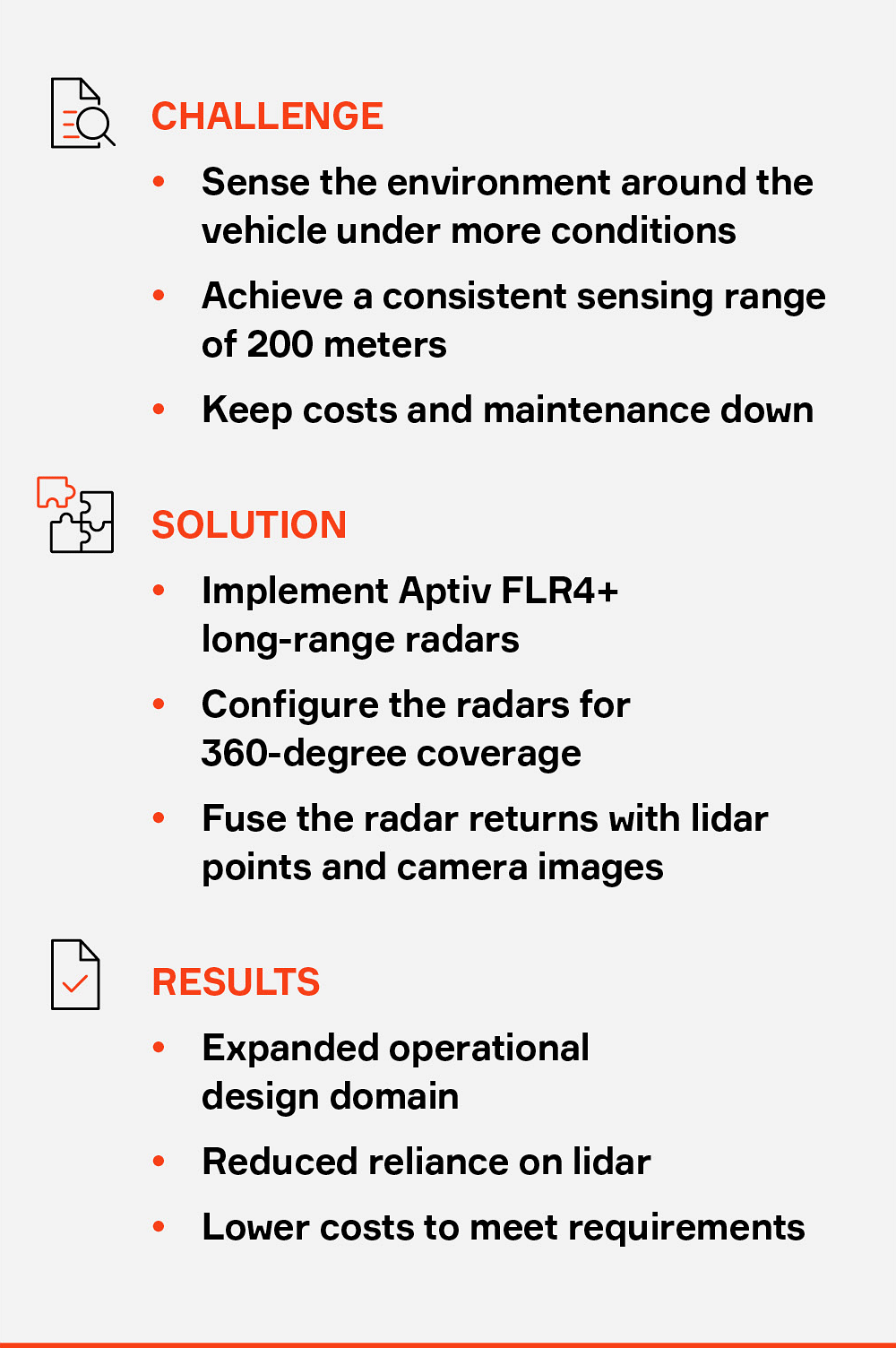

Driverless-vehicle maker Motional uses an array of high-performance radars to build a more complete model of the environment around a vehicle, cost-effectively and without compromising performance.

Motional develops and deploys autonomous vehicles for ride-hailing and delivery services. Because its vehicles operate at Level 4, meaning they drive themselves without human intervention within a defined operating domain, they require extremely robust sensing and perception capabilities. To make the most informed driving decisions possible, the vehicles must have a very clear sense of which objects are around them, and how fast and in what direction those objects are moving.

Stretching further

Traditionally, lidar has provided much of the object detection data for highly autonomous vehicles. However, Motional was looking to expand the range of its vehicles’ sensors while improving reliability and maintaining performance in adverse weather conditions, all of which are radar’s strengths.

Plus, lidar costs five to 10 times as much as radar.

Lidar systems use pulsed lasers to detect objects and their distance from a vehicle. The lasers require line of sight to make those detections, which can be hampered by rain, snow or fog. Motional was also looking to leverage sensors with fewer moving parts to keep maintenance costs down. Radar uses solid-state electronics, which have no moving parts. While the industry continues to make progress on solid-state lidar, there is not yet a suitable alternative that meets Motional’s demanding requirements.

But perhaps the biggest requirement for Motional is extending its vehicles’ sensing range. Its vehicles need to be able to detect objects with high fidelity at longer distances than lidar can provide.

Motional continues to employ long- and short-range lidar sensors so that the vehicle can clearly detect the shapes of objects within the sensors’ range in fair weather conditions, but it complements those devices with twice as many radars.

Motional uses Aptiv FLR4+ long-range radars in a configuration that provides 360-degree coverage around the vehicle. In contrast, a vehicle that operates at Level 2 or Level 3 might include a single long-range radar in the front, with short-range radars providing coverage from each corner.

The vehicle uses a neural net to perform sensor fusion between the lidar point cloud and the radar point cloud, which enables it to preserve more data than if each point cloud were processed separately. The vehicle then further fuses that data with images gathered through an array of cameras, using a technique called point painting, which projects the merged point cloud onto the camera images.
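The projection step in point painting can be illustrated with a minimal sketch. It assumes a pinhole camera model, points already transformed into the camera frame, and a per-pixel class-score map produced by some image segmentation network. The function name `paint_points` and all parameters are hypothetical; the article does not describe Motional's actual implementation.

```python
import numpy as np


def paint_points(points_xyz, seg_scores, K):
    """Append image class scores to each 3D point that projects into the image.

    Assumptions (illustrative only, not Motional's pipeline):
      points_xyz: (N, 3) points in the camera frame (x right, y down, z forward).
      seg_scores: (H, W, C) per-pixel class scores from a segmentation network.
      K:          (3, 3) pinhole camera intrinsic matrix.
    Returns an (M, 3 + C) array for the M points that land inside the image.
    """
    h, w, _ = seg_scores.shape

    # Keep only points in front of the camera; points behind it cannot be seen.
    pts = points_xyz[points_xyz[:, 2] > 0]

    # Pinhole projection: multiply by K, then divide by depth to get pixels.
    uvw = pts @ K.T
    u = np.floor(uvw[:, 0] / uvw[:, 2]).astype(int)  # pixel column
    v = np.floor(uvw[:, 1] / uvw[:, 2]).astype(int)  # pixel row

    # Discard points whose projection falls outside the image bounds.
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)

    # "Paint" each remaining point with the class scores at its pixel.
    return np.hstack([pts[inside], seg_scores[v[inside], u[inside]]])
```

Each painted point carries its 3D position plus the class scores of the pixel it lands on, so a downstream detector can use geometry and image semantics together.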

A better view

The radars provide a more complete picture of what is going on around the vehicle. The 360-degree coverage allows the vehicle to see overtaking traffic approaching from behind, which is especially important for lane changes. The radars can provide returns from objects as far as 200 meters from the vehicle, including each object's distance and speed.
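To see why range and speed together matter for lane changes, consider a rough worked example. It assumes the radar reports a distance and a closing speed (positive when the object is approaching) and that the closing speed stays constant. The function name `time_to_reach` is hypothetical, and the numbers other than the 200-meter range are illustrative.

```python
def time_to_reach(range_m, closing_speed_mps):
    """Seconds until an object closes the gap, assuming constant closing speed.

    range_m:           distance to the object in meters.
    closing_speed_mps: rate at which the gap shrinks, in meters per second
                       (positive means the object is approaching).
    Returns infinity when the object is not approaching.
    """
    if closing_speed_mps <= 0:
        return float("inf")
    return range_m / closing_speed_mps


# A vehicle detected 200 m behind and closing at 10 m/s (36 km/h faster)
# would take 200 / 10 = 20 seconds to reach the autonomous vehicle.
seconds = time_to_reach(200.0, 10.0)
```

Under these assumptions, detecting the same vehicle at only 100 meters would halve the time available to plan the lane change.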

The automotive-grade radars also expand the operational design domain, working more reliably in rain, snow and fog. Combined with lidar sensors, they are able to provide a more complete picture in low-light conditions, when cameras struggle.

Overall, the radars help the system to be more confident in its assessment of the environment, which in turn allows it to take safe driving actions with higher confidence.

A joint venture between Aptiv and Hyundai Motor Group, Motional is working with Aptiv to continue to improve the AI and machine learning capabilities of radar, enabling the system to use radar to more accurately classify objects as vehicles, pedestrians, bicyclists or something else.

Motional is looking to employ radar for localization in the future, to help a vehicle establish exactly where it is on a high-definition map. Today, this function is carried out by lidar.

The end result is that radar is helping Motional provide autonomous vehicles with smoother, more confident movements that will yield a better mobility experience for passengers.